诸怀疆 | Huaijiang Zhu

I am a founding engineer at Alquist working on multimodal reasoning for embodied agents. I received my Ph.D. from New York University, where I was advised by Prof. Ludovic Righetti at the Machines in Motion Laboratory. During my Ph.D., I interned at the Boston Dynamics RAI Institute, working with Dr. Tao Pang on contact-rich dexterous manipulation. Prior to NYU, I received my bachelor's and master's degrees in Electrical Engineering from the Technical University of Munich.

selected publications

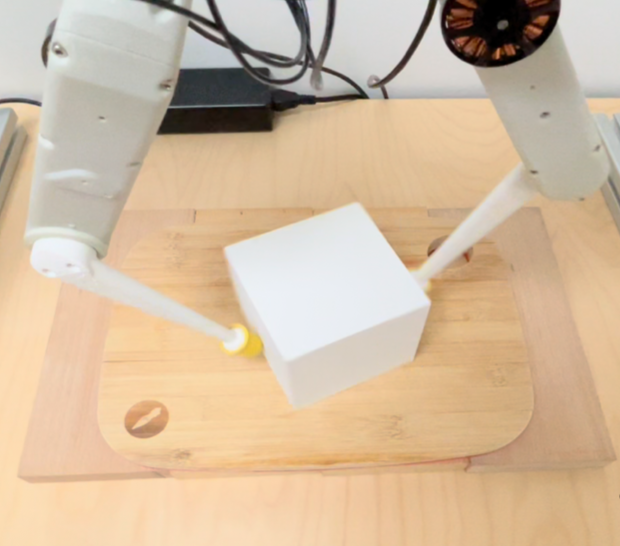

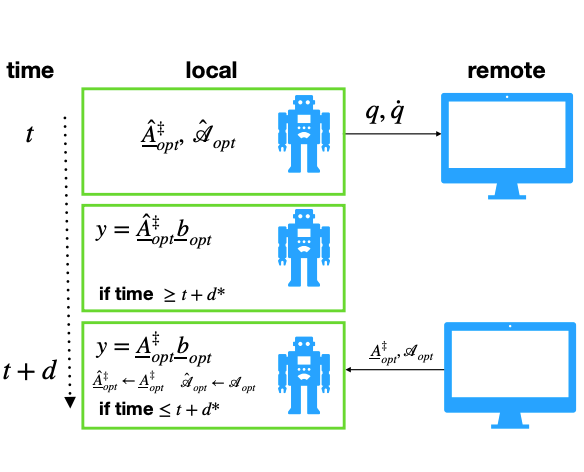

Enabling Remote Whole-Body Control with 5G Edge Computing

Huaijiang Zhu, Manali Sharma, Kai Pfeiffer, Marco Mezzavilla, Jia Shen, Sundeep Rangan, Ludovic Righetti

IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 2020